This would be Class 4 of of our Data Science Series and in this class we would complete the remaining topics on Data Preprocessing and Data Cleaning. You can get Part 1 here.

The following are covered here

- Numerical and Categorical Values Conversion

- Data Binarization

- Data Standardization

- Data Labelling and Encoding

- Data Splitting – Feature and Class; Train & Test

1. Numerical and Categorical Values Conversion

When some values are given as strings or characters, we may have to convert them to numeric values. For example, if you load up the dataset and take a look at the Sex column, you see that it has the values ‘Male’ and ‘Female’. We would need to change this to values 0 and 1.

In this case, we should have Male = 1 and Female 0.

The format to do this conversion is

df.loc[df['colname'] == <value>, 'colname'] = <new_value>

The code above will replace the <value> in the column ‘colname’ with <new_value>

Therefore the code below would convert the ‘Male’ and ‘Female’ values in out Titanic data

titanic_df.loc[titanic_df['sex']=='male', 'sex'] = 1 titanic_df.loc[titanic_df['sex']=='female', 'sex'] = 0

Add or Remove Column

Us the code below to add a column and remove a column

df['new_col'] = None # Add a column df = df.drop(columns = 'new_col', axis = 1) # Drop a column

Exercise – Replace the values in the Embarked column with values 1, 2 3

2. Data Binarization or Thresholding

Just as the name indicates, binarization is a preprocessing technique used to change values in a dataset to binary (0 and 1). This may be achieved by setting a threshold. The values below the threshold are set to 0 while the values below the threshold are set to 1.

Binarization may be needed when logistic regression would have to be performed

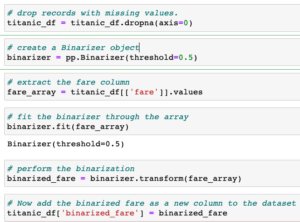

For example, in our Titanic dataset, we can binarize the fare field following the steps below:

I have added it as a screen shot for clarity:

3. Data Standardization

This is also called ‘mean removal and variance scaling’ is a technique used to transform the dataset so that the feature look more like a standard normally distributed data. That is data with a mean of 0 and standard deviation of 1.

Standardization is used in Machine learning estimators such as linear regression and logistic regression where better results are achieved with a normally distributed data. In Python we use the StandardScaler from sklearn.

This work the same as MinMaxScaler from Part 1.

Exercise – Import the Wine dataset. Perform Standardization on the Proline column of the wine dataset.

4. Data Labelling and Encoding

This is an automated way of performing Numerical and Categorical values conversion which we covered in section 1 above. This means that instead of using string labels for the data (like ‘Male’ and ‘Female’), we encode the values into numeric values or number labels. So if theres are n labels in the dataset, they would be encoded in 0 to n-1. The drawback of this method is that you don’t have control over the labels that are assigned.

Sklearn provides a module LabelEncoder() which we can use to perform data labelling.

The code below extracts the home.dest column, encodes it and adds it as a new column in the dataset. See the video for more explanation

encoder = pp.LabelEncoder() home_array = titanic_df[['home.dest']].values encoder.fit(home_array.ravel()) # ravel() converts 1d vector to array home_array_encoded = encoder.transform(home_array) titanic_df['home.dest.encoded'] = home_array_encoded

5. Data Splitting – Features and Class; Train & Test

This is use mostly when we apply supervised learning algorithms to our dataset. Supervised learning is just a ‘fancy word’ for classification and regression!

Splitting into Features and Class

Dataset used for classification is normally has two parts: the features (X) and the class (Y). The idea is that the class can be deduced based on the feature. In the case of the Titanic Dataset, we would like to determine who survived. Therefore, the survived column is the class while every other columns are the features. The code below would split the data in X (features) and Y(class).

Y = titanic_df[['survived']] X = titanic_df.drop(columns = 'survived', axis=1)

Splitting into Train and Test

In supervised learning, you will have to split your data into two parts: training dataset and test dataset. The training dataset is used to train the model while the test dataset is used to test the performance of the model on new data.

In the code below, I generate a dataset of 1000 records, then perform train-test split to split the data into 70% training set and 30% test dataset.

# split a dataset into train and test sets from sklearn.datasets import make_blobs from sklearn.model_selection import train_test_split # Generate dataset X, y = make_blobs(n_samples=1000) # split into train and test sets X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.30) print(X_train.shape, X_test.shape, y_train.shape, y_test.shape)